Terraform’s external data source is a useful escape hatch when you need to call out to a script to fetch data that isn’t available via a provider. However, it comes with a sharp edge that often catches people out: Terraform expects the result to be a flat map of string → string values. If your…
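The flat-map constraint can be illustrated with a small Python program for the external data source. This is a minimal sketch, not necessarily the approach taken in the full post. It assumes the script is wired up as `program = ["python3", "lookup.py"]` (a hypothetical filename), and the lookup result is hard-coded placeholder data. Any non-string value is JSON-encoded into a string so the output stays a flat string → string map.

```python
import json
import sys


def flatten_for_terraform(result: dict) -> dict:
    """Return a flat string -> string map that the external data source accepts.

    Strings pass through unchanged; every other value (lists, maps, numbers,
    booleans) is JSON-encoded into a string.
    """
    return {
        key: value if isinstance(value, str) else json.dumps(value)
        for key, value in result.items()
    }


def main() -> None:
    # Terraform writes the `query` argument to stdin as a JSON object of strings.
    raw = sys.stdin.read()
    query = json.loads(raw) if raw.strip() else {}

    # Hypothetical placeholder data; a real script would call an API or CLI here.
    result = {
        "name": query.get("name", "example"),
        "tags": {"env": "dev", "owner": "platform"},
        "subnets": ["10.0.1.0/24", "10.0.2.0/24"],
    }

    # The program must print a single JSON object whose values are all strings.
    json.dump(flatten_for_terraform(result), sys.stdout)


if __name__ == "__main__":
    main()
```

On the Terraform side, something like `jsondecode(data.external.lookup.result.tags)` would turn the encoded string back into an object (`lookup` is a hypothetical data source name).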

Tag Archives: Terraform

Deploying Azure Purview with Terraform: Overcoming AzureRM Limitations

Microsoft shifted Azure Purview to be tenant-aligned, but the AzureRM provider hasn’t made that move yet. If you deploy your Azure infrastructure with Terraform, you need some workarounds to get it working, especially when you’re trying to deploy Purview securely using private networking. After a few painful…

How to Secure Your Terraform State File in Azure

Terraform has become the standard for managing cloud infrastructure, and with good reason. It provides consistent, repeatable deployments and integrates with almost every cloud provider. But there’s one piece that’s often overlooked until it causes problems: the Terraform state file. Your terraform.tfstate file is more than just metadata; it’s the single source of truth…

Validating Azure APIM in CI: A Practical Approach to Safe API Deployments

When deploying any code, you want to validate it as thoroughly as you can beforehand. However, with APIs in Azure API Management (APIM), you can’t validate the XML policy or the API logic until they are deployed into the APIM service. This limits your options for validating the code before…

Manage Complex Terraform Lists and Maps in a CSV Format

When developing some resources in Terraform, you can end up with a large, complex map or list of entries. This can become hard to manage, difficult to read and even worse to maintain. An easier method is to convert these items into a comma-separated values (CSV) file. This can condense something that could be hundreds of lines down to…
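Terraform’s built-in `csvdecode()` function turns CSV text into a list of maps keyed by the header row, with every value as a string. A `for` expression that builds a map from those rows fails on duplicate keys, so a quick pre-check in CI can catch mistakes early. The Python sketch below mirrors that shape and raises on duplicates. It is an illustration under assumptions, not the method from the full post; the column names and subnet data are hypothetical.

```python
import csv
import io

# Hypothetical CSV replacing a long Terraform map of subnets.
CSV_TEXT = """name,address_prefix,service_endpoint
snet-app,10.0.1.0/24,Microsoft.Storage
snet-data,10.0.2.0/24,Microsoft.Sql
"""


def rows_to_map(csv_text: str, key: str) -> dict[str, dict[str, str]]:
    """Parse CSV text (header row = field names) and key each row by `key`.

    Every value stays a string, as with Terraform's csvdecode(). Duplicate
    keys raise an error, since a Terraform `for` expression producing a map
    would also reject them.
    """
    result: dict[str, dict[str, str]] = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        row_key = row[key]
        if row_key in result:
            raise ValueError(f"duplicate key {row_key!r} in column {key!r}")
        result[row_key] = dict(row)
    return result


if __name__ == "__main__":
    subnets = rows_to_map(CSV_TEXT, "name")
    for name, subnet in subnets.items():
        print(name, subnet["address_prefix"])
```

The equivalent Terraform expression would be along the lines of `{ for s in csvdecode(file("${path.module}/subnets.csv")) : s.name => s }`, where `subnets.csv` is a hypothetical filename.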

Best Practices for Terraform Module Testing and Validation

Terraform modules are great for isolated components you can reuse and plug into your main Infrastructure as Code codebase. They can also be shared and used by multiple other teams at the same time to reduce repeated code and complexity and to increase compliance. However, as they are isolated, they are harder to test, maintain…

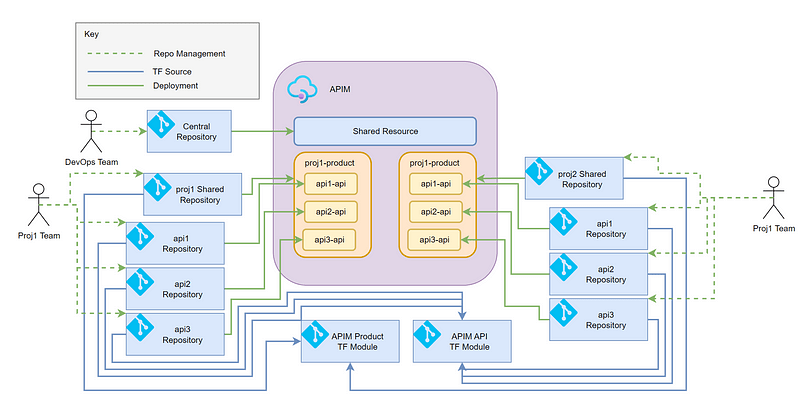

Shared Azure API Management Service Design

Azure API Management Services (APIM) are a powerful, flexible and well-equipped product in Azure, but they are also expensive. There are reasons for this, and there are general ways to reduce the cost, such as choosing a different SKU. Another way is to share it with other products within your organisation instead of having a dedicated APIM. I…

Terraform Code Quality

Terraform is like any other coding language: there should be code quality and pride in what you produce. In this post I would like to describe why you should care about code quality and detail some of the practices you should follow consistently every time you produce code. Code quality is like when you are…