From March 2021, Azure is deprecating the Container Settings in Azure Web Apps, which forces you to use the new Deployment Center. This looks very nice, but one change is going to force you to have weaker security. This change is to have the Admin Credentials enabled, but there is something you… Continue reading “Automate Security for Azure Container Registry”

Tag Archives: Azure

Push Docker Image to ACR without Service Connection in Azure DevOps

If you are like me and use infrastructure as code to deploy your Azure infrastructure, then the Azure DevOps Docker task doesn’t work. To use this task you need to know which Azure Container Registry (ACR) you are targeting and have it configured so that you can push your Docker images to the registry, but you don’t know that yet. Here I show how you can still use Azure DevOps to push your images to a dynamic ACR.

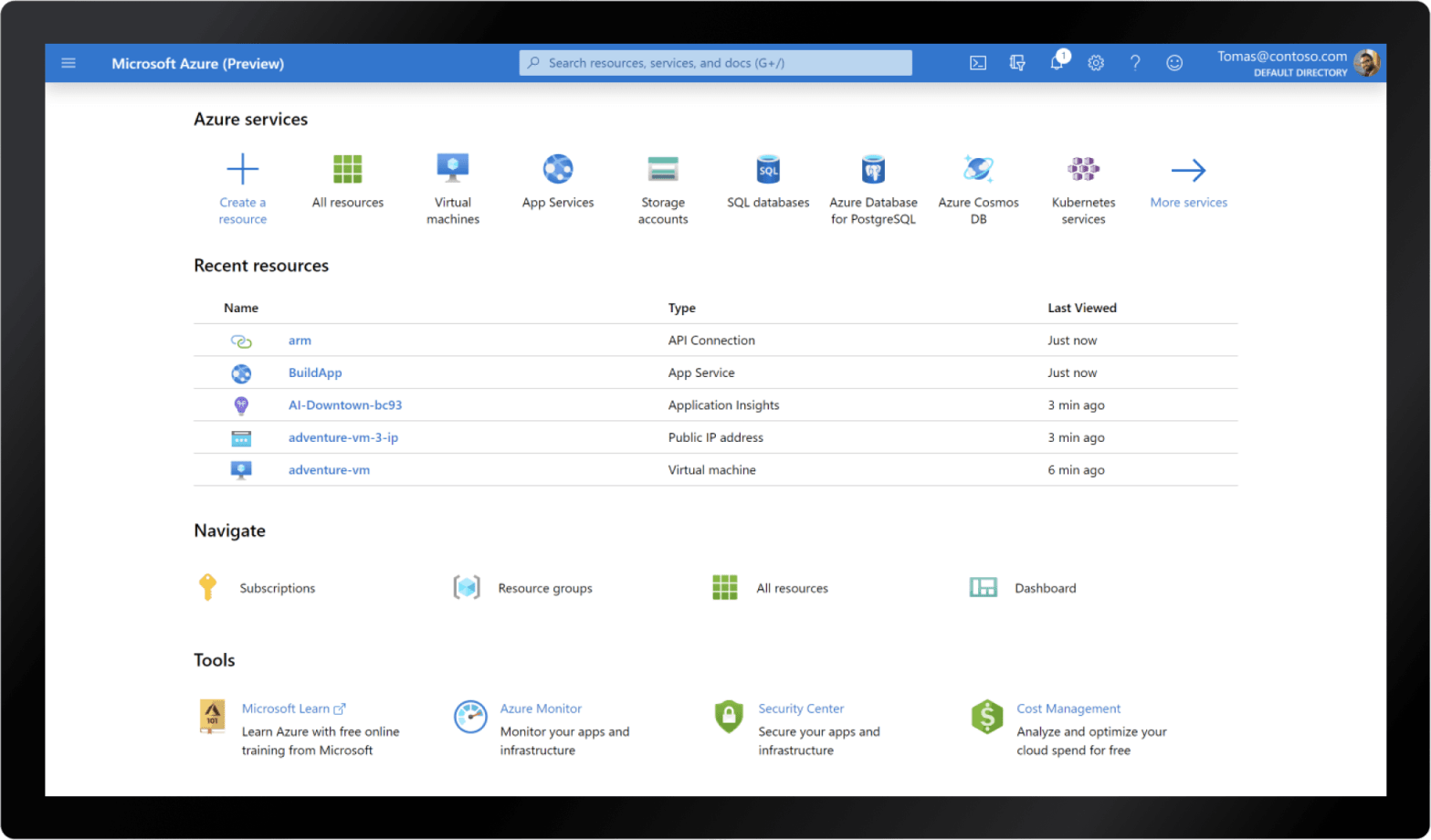

Where to find Azure Tenant ID in Azure Portal?

Some of the Azure documentation from Microsoft can be confusing or incomplete, and one question I get asked is ‘Where is the Tenant ID?’. Below I give three locations (there are probably more) where you can find the Tenant ID in the portal. I have also added how to get the Tenant ID with the Azure CLI.

Terraform remote backend for cloud and local with Azure DevOps Terraform Task

When working with Terraform, you will do a lot of work and testing locally. For that, you do not want to store your state file in remote storage, and instead just store it locally. However, when you deploy, you don’t want to be converting the configuration at that point, which can get messy when working with Azure DevOps. This is a solution that works for both local development and production deployment with the Azure DevOps Terraform Task.

Use Terraform to connect ACR with Azure Web App

You can connect an Azure Web App to Docker Hub, a private repository, or an Azure Container Registry (ACR). Using Terraform you can take it a step further and build your whole infrastructure environment at the same time as connecting these container registries. However, how do you connect them together in Terraform?

Authenticate Terraform with Azure CLI

Sometimes there are no error messages, or the ones you get are not helpful at all, but sometimes there are error messages that help you debug the issue, which is the best thing ever. Then again, this only helps if the error message points you to the correct problem to fix. I stumbled across an issue recently where I could not add a Secret to an Azure Key Vault via Terraform, and the error message did not help at all.

Azure DevOps Pipeline Templates and External Repositories

When working with Azure DevOps, you can use YAML to create the build and deployment pipelines. To make this easier and more repeatable you can also use something called templates. However, if you want to use them in multiple repositories, you don’t want to repeat yourself. There is a method to share them, as I will demo.

Azure REST API Scopes

When working with the Azure REST API you need to provide the scope in all API requests, so Azure knows where you are looking. However, although the documentation asks for the scope throughout, it does not explain or link to an explanation of what a scope is and what the formats are. Therefore, … Continue reading “Azure REST API Scopes”
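
As a rough illustration of what these scope strings tend to look like, here is a minimal Python sketch. The helper names `build_scope` and `management_group_scope` are hypothetical and not part of any Azure SDK, and the IDs and resource names are placeholders. It only assembles the common path formats for a subscription, a resource group, a single resource and a management group; it does not call the API.

```python
from typing import Optional


def build_scope(
    subscription_id: str,
    resource_group: Optional[str] = None,
    provider_path: Optional[str] = None,
) -> str:
    """Assemble a scope string (hypothetical helper, not an Azure SDK call).

    provider_path is assumed to look like
    "Microsoft.Storage/storageAccounts/mystorage".
    """
    # Subscription level: /subscriptions/{subscriptionId}
    scope = f"/subscriptions/{subscription_id}"
    if resource_group:
        # Resource group level
        scope += f"/resourceGroups/{resource_group}"
    if provider_path:
        # Resource level: provider namespace, resource type and resource name
        scope += f"/providers/{provider_path.strip('/')}"
    return scope


def management_group_scope(management_group_id: str) -> str:
    """Management group level scope (sits above subscriptions)."""
    return f"/providers/Microsoft.Management/managementGroups/{management_group_id}"


if __name__ == "__main__":
    sub = "00000000-0000-0000-0000-000000000000"  # placeholder subscription ID
    print(build_scope(sub))
    print(build_scope(sub, "my-rg"))
    print(build_scope(sub, "my-rg", "Microsoft.Storage/storageAccounts/mystorage"))
    print(management_group_scope("my-mg"))
```

Each level simply extends the path of the level above it, which is why a single helper with optional arguments is enough for the subscription, resource group and resource cases.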