So you generate a PDF in C# or any other back-end code, but need to send it back to the JavaScript making the AJAX request. The trouble is how to return a file to the client side, which is the same problem I faced. I have seen loads of methods for doing this online, but these are the ways I found worked with C#.NET and JavaScript.

Tag Archives: C#

How to merge multiple images into one with C#

Due to a requirement we needed to layer multiple images into one image. This needed to be fast and efficient, and we didn’t want to use any third-party software, as that would increase maintenance. Through some fun research and testing I found a neat and effective method to get the required outcome using only C#.NET 4.6.

What is in a project’s builds and releases

While working with other companies I have seen multiple builds and releases, and I have also read books like ‘Continuous Delivery: Reliable Software Releases through Build, Test, and Deployment Automation’. Through this I have learnt more and more about what should really be in the builds and releases of code applications. I would like to describe how I think both should be used to create a scalable, reliable and repeatable process that brings confidence to your projects.

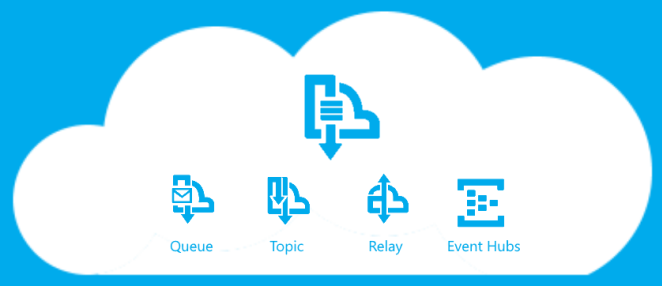

How to build Azure Service Bus Relay Sender and Listener?

This is one of those ‘I tried to do it and found it hard, so here is how I did it’ posts. I was assigned to look into how to build a Sender and Listener using the Azure Service Bus Relay, so we could send data from Azure to on-premises securely. Now there might be debates about whether this is secure compared to other methods, but that is not what I was asked and not what this post is about.

How to test a WCF service with Visual Studio Command Prompt?

It can be a pain sometimes to test your web service, and a longer process than you would like. I then found a method on the glorious web for using Visual Studio to test it, and thought I would share it with you.

How to get the user’s IP Address in C#.NET?

When you search for this on your favourite search engine (Google), you will get flooded with loads of different ways to get the end result. This is great in a sense, as you know there are thousands of people with different answers, but that is also where the problem lies. There are so many single responses on how to get the user’s IP address in C#.NET, but not one that shows you everything. Therefore I will present below different methods to get the user’s IP and how I have implemented it in a project.

Kentico the mule of development

Over my time working with a CMS called Kentico I have found some pros and cons of this mode of working, so I wanted to share my thoughts on whether it is a good idea to work on these CMSs.

Guide to the Style Guide

This is about coding style guides: what they are, whether you should have one and, if so, which one. I will mainly be following it through with JavaScript, but the knowledge can be transferred to other languages, as it is the principle that I am looking at.