One of the most secure ways to connect to an Azure MySQL Database, or other services in Azure, is through a Private Endpoint. Microsoft documents its recommended architecture for an App Service connecting to a MySQL Server, which is good if you are using the Azure Portal, but there are some missing… Continue reading “Connect Azure MySQL to Private Endpoint with Terraform”

Category Archives: Networking

Setup Hyper Guest for SSH without IP Address

When setting up Hyper-V Guest hosts, I found it a little tricky, and it was hard to find documentation on how to set them up easily, so I thought I would share how I got them configured with the simplest process. With this setup you can also SSH into the Guest Host even… Continue reading “Setup Hyper Guest for SSH without IP Address”

SSH to Hyper-V Virtual Machine using SSH.NET without IP Address

I have used the .NET Core NuGet package SSH.NET to SSH into machines a few times; it is a very simple, slick and handy tool to have. However, you cannot SSH into a Virtual Machine (VM) in Hyper-V that easily without some extra fiddling to get an exposed IP Address. With your standard SSH command you can… Continue reading “SSH to Hyper-V Virtual Machine using SSH.NET without IP Address”

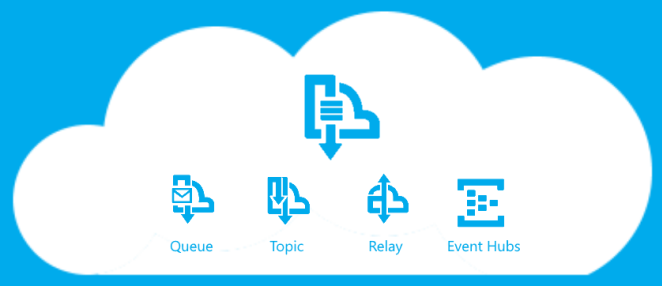

How to build Azure Service Bus Relay Sender and Listener?

This is one of those "I tried to do it, found it hard, so here is how I did it" posts. I was assigned to look into how to build a Sender and Listener using the Azure Service Bus Relay, so we could send data from Azure to On Premise securely. Now there might be debates about whether this is secure compared to other methods, but that is not what I was asked to look into, nor what this post is about.

Dont be a fool and use the back up tools

Backing up your data is the most vital thing any business or individual can do. It is the biggest must, but people still don’t think about it. Due to recent events, I think it would be good to share my views and tips on the best solutions.

PRISM and snoopers

I was just listening to a podcast where they were talking about governments reading your email and how people are trying to find ways to encrypt their data, so I thought I would share my opinion.